Hundreds of thousands of companies are building AI applications. There are more than five million AI-related projects on GitHub alone. The AI race is on, and most organizations are building faster than their security teams can keep up.

The credentials that authenticate AI services, the system prompts that define their behavior, and the training data that shapes their output flow through the development cycle and into the applications themselves with virtually no visibility or control.

AI app development lifecycle and data risk

In traditional software, data is just an input. In AI applications, data determines how the application behaves. As a result, the attack surface expands from protecting application logic to securing the data that teaches AI what to do.

Training data and retrieval sources pull from production

AI systems need data to work. That means connection strings and access tokens flow through repos, wikis, and tickets, creating a much larger blast radius than in typical applications. A single leaked credential can expose everything an AI agent is trained on or can query, rather than a single database.

System prompts reveal your security boundaries

Model configurations and system prompts get stored in repos and wiki pages. They describe internal policies, data schemas, and what the model is and isn't allowed to do. That's a roadmap telling attackers exactly what they can exploit.

AI agents are overprivileged by design

Agents call APIs, query databases, and take autonomous actions. The excessive access scopes defined during development often persist into production.

The incident we should learn from

In 2024, Meta's Director of Alignment disclosed that her autonomous AI agent deleted her entire inbox, ignoring explicit instructions to ask for permission before taking action. The agent had broad permissions and no enforced guardrails at runtime. It bypassed its own constraints and took destructive, irreversible action on its own.

This wasn't a prompt injection attack from an external adversary. It was the permissions and trust boundaries defined during development, playing out exactly as configured.

The lesson: AI security begins with deciding, deliberately, what an AI system can access and what it is allowed to do, and then enforcing those decisions at runtime rather than trusting the agent to follow instructions.
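To make the idea of an enforced trust boundary concrete, here is a minimal sketch of a deny-by-default permission check that sits outside the model. The class name, action names, and approval flag are all hypothetical and chosen for illustration; this is not how any particular agent framework works.

```python
from dataclasses import dataclass, field

# Hypothetical action names, used only for illustration.
DESTRUCTIVE_ACTIONS = {"delete_email", "drop_table"}


@dataclass
class AgentPolicy:
    """Deny-by-default policy checked in code, not in the prompt."""

    allowed_actions: set[str] = field(default_factory=set)

    def authorize(self, action: str, human_approved: bool = False) -> bool:
        # Anything not explicitly granted is refused.
        if action not in self.allowed_actions:
            return False
        # Destructive actions also need an explicit human approval flag,
        # regardless of what the model was told in its system prompt.
        if action in DESTRUCTIVE_ACTIONS and not human_approved:
            return False
        return True


policy = AgentPolicy(allowed_actions={"read_email", "delete_email"})
print(policy.authorize("read_email"))                         # True
print(policy.authorize("delete_email"))                       # False
print(policy.authorize("delete_email", human_approved=True))  # True
print(policy.authorize("send_email"))                         # False
```

The point of the design is that the check runs on every call, so an agent that ignores its instructions still cannot take a destructive action without approval.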

Where AI app development creates security debt

Teams can document system prompts in Confluence, manage training scripts in GitHub, package models in Docker images, and share configurations in Slack. Along the way, credentials, training data, and AI logic accumulate in dozens of tools that aren't designed to securely handle such sensitive information.

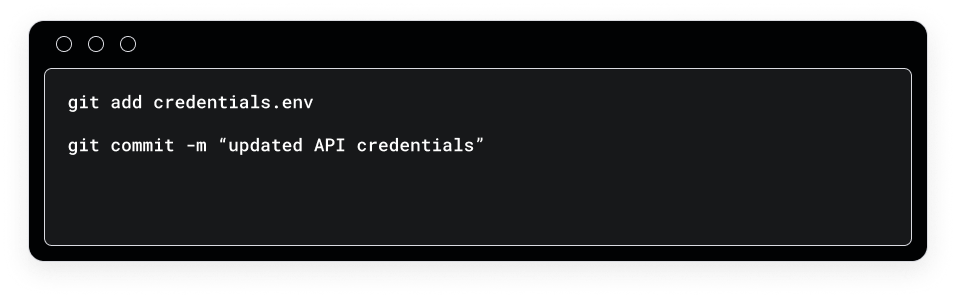

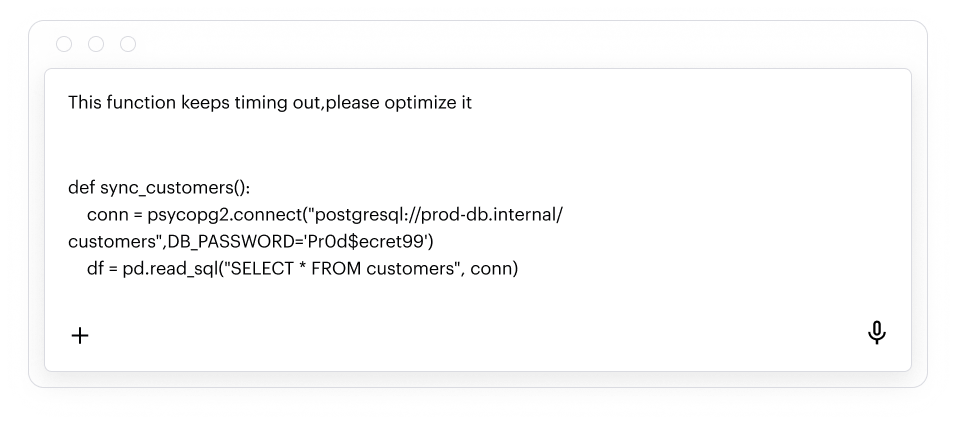

Repos: Where credentials get baked in

AI systems require access to data sources, APIs, and models. That means developers are constantly working with connection strings, API tokens, and private keys. The same patterns that create risk in traditional development are amplified in AI development:

- AWS access keys in .env files get committed alongside model training scripts

- Database connection strings appear in retrieval configuration files

- API tokens for model providers sit in config files alongside system prompts

- Test datasets contain real customer PII used to validate model outputs

Once pushed, secrets persist in Git commit history even if they are deleted from the current branch.
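As a rough illustration of why history matters, the sketch below scans diff-style text for two credential patterns. The rule names and the sample diff are made up for this example, and the two regular expressions are only a small illustrative subset; production scanners rely on much larger rule sets plus validation. In practice you could feed it the output of `git log -p --all`, which includes removed lines as well as added ones.

```python
import re

# Illustrative patterns only; real scanners use far larger rule sets.
PATTERNS = {
    "aws_access_key_id": re.compile(r"\b(?:AKIA|ASIA)[0-9A-Z]{16}\b"),
    "url_with_password": re.compile(
        r"\b[a-z][a-z0-9+.-]*://[^\s:/@]+:[^\s@/]+@[^\s/]+"
    ),
}


def find_secrets(text: str) -> list[tuple[int, str]]:
    """Return (line_number, rule_name) for every line matching a pattern."""
    findings = []
    for line_number, line in enumerate(text.splitlines(), start=1):
        for rule_name, pattern in PATTERNS.items():
            if pattern.search(line):
                findings.append((line_number, rule_name))
    return findings


# Fake diff text: the key is added in one commit and removed in a later one.
# AKIAIOSFODNN7EXAMPLE is the placeholder key used in AWS documentation.
sample = """\
+AWS_ACCESS_KEY_ID=AKIAIOSFODNN7EXAMPLE
+DATABASE_URL=postgres://app:s3cret@db.internal:5432/prod
-AWS_ACCESS_KEY_ID=AKIAIOSFODNN7EXAMPLE
+MODEL_NAME=retriever-v2
"""
print(find_secrets(sample))
```

Line 3 of the sample is a deletion, yet it is still flagged: the removal itself is recorded in history along with the secret.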

Wikis and issue trackers: Where AI architecture is documented

Architecture decisions, data flow diagrams, agent permission scopes, and model selection rationale get documented in Confluence and Jira. This is where the blueprint for your AI services lives, and where credentials and sensitive configurations are stored. For example:

- Deployment runbooks with hardcoded API keys for model providers

- Architecture docs describing which data sources AI agents have access to

- System prompt contents pasted into tickets for review

- Access tokens embedded in onboarding documentation for AI tooling

This documentation is sensitive for any application, but the risk gets amplified for AI services. Architecture docs reveal the logic and permissions that attackers can exploit for maximum damage.

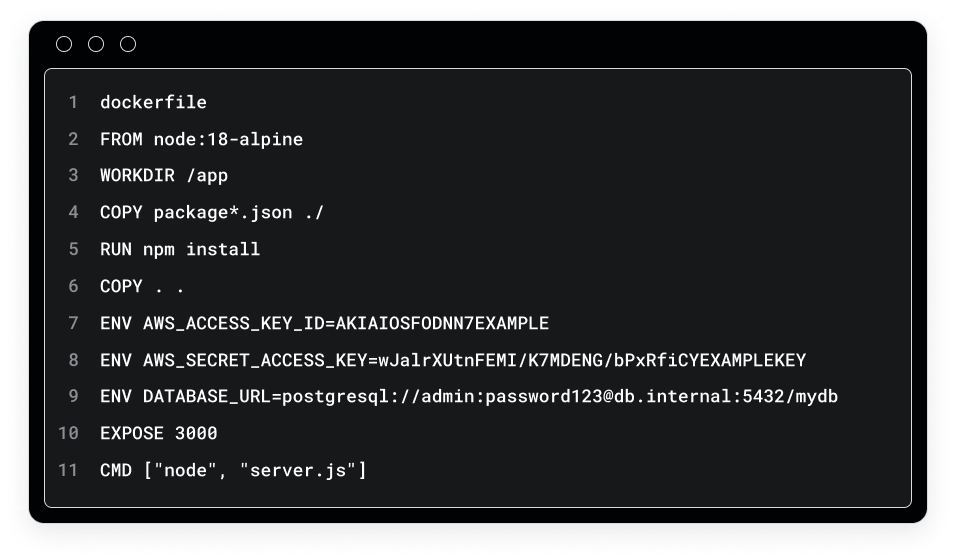

Artifact registries: Where secrets get frozen into builds

Docker images and packages for AI applications often contain embedded credentials, hardcoded configurations, and sensitive data baked in at build time. This is particularly dangerous because secrets embedded in a container image persist permanently in the image layers. Even if you delete a secret file later, Docker keeps the earlier layer in the image history where those credentials remain fully recoverable.

For example, once a model provider API key and database credentials get hardcoded into a container image during the build, they persist in the specific layer where they were added. Docker stores the filesystem changes made by each build instruction as a separate layer, so if step 1 copies files containing secrets and step 2 deletes those files, step 1's layer still contains the secrets.
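The layering behavior can be simulated without Docker. The sketch below builds two in-memory tar archives that stand in for image layers; the file names and the fake key are assumptions for illustration. The second layer "deletes" the file using a whiteout entry (a `.wh.` prefix on the file name), which is the convention the OCI image specification uses to record deletions.

```python
import io
import tarfile


def make_layer(files: dict[str, bytes]) -> bytes:
    """Build an in-memory tar archive standing in for one image layer."""
    buffer = io.BytesIO()
    with tarfile.open(fileobj=buffer, mode="w") as tar:
        for name, content in files.items():
            info = tarfile.TarInfo(name=name)
            info.size = len(content)
            tar.addfile(info, io.BytesIO(content))
    return buffer.getvalue()


def files_in_layer(layer: bytes) -> dict[str, bytes]:
    """Read every regular file stored in a layer archive."""
    with tarfile.open(fileobj=io.BytesIO(layer), mode="r") as tar:
        return {m.name: tar.extractfile(m).read() for m in tar if m.isfile()}


# Step 1 copies a file containing a (fake) key into the image.
layer_1 = make_layer({"app/.env": b"MODEL_API_KEY=sk-example-not-real"})
# Step 2 "deletes" it: the new layer records a whiteout marker,
# but nothing is erased from the earlier layer.
layer_2 = make_layer({"app/.wh..env": b""})

for index, layer in enumerate([layer_1, layer_2], start=1):
    print(index, files_in_layer(layer))
```

The final filesystem hides `app/.env`, but anyone who unpacks layer 1 directly can still read the key. That is why scanning only the running container's filesystem misses these secrets.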

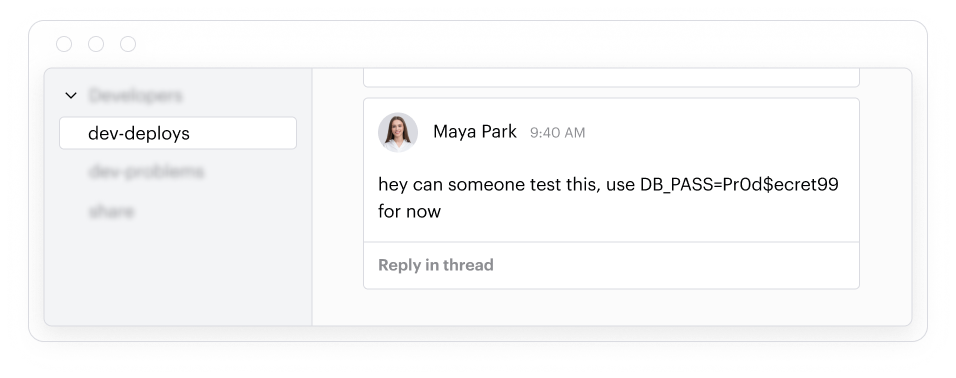

Collaboration tools: Where context gets shared (along with everything else)

Developers share AI agent configurations in messaging platforms like Slack and Teams. For example, system prompts or sensitive data samples can get pasted into messages to debug model behavior or illustrate edge cases. These communications are rarely monitored.

AI assistants: The data that leaves the building

Developers paste code into ChatGPT, Copilot, and other AI assistants. For example, they might want to debug model logic, optimize retrieval pipelines, or improve agent prompts. That code often contains production credentials and customer PII, which then flows to external AI providers without organizational visibility.

Legacy solutions can't secure AI app development

Security teams typically attempt to secure AI app development with AppSec tools like Entro, Snyk, or Checkmarx. These tools excel at finding secrets in code repositories and scanning for known vulnerabilities, but they weren't designed for AI development's unique data flows. For example, they can't detect when system prompts in Confluence pages reveal an agent's security boundaries, identify excessive API permissions granted during development, or scan JFrog artifacts for training datasets containing customer PII.

When developers paste proprietary model configurations into ChatGPT for debugging, AppSec tools have no visibility into that data exposure. The fundamental limitation is that traditional AppSec tools secure code, whereas AI development security requires protecting sensitive data and configurations across wikis, issue trackers, artifact registries, and AI assistant interactions throughout the entire development lifecycle.

What complete AI development security looks like

Securing the AI application development lifecycle requires being able to discover sensitive data, map who can access it, detect threats, and remediate risk across all tools that developers use to design, build, package, and ship AI services.

In repos:

- Full commit history scanning, not just the current branch.

- Intelligent classification that distinguishes production credentials from test tokens.

- Automated remediation of risky permissions, misconfigurations, ghost users, and sharing links.

- Real-time alerts on new commits with sensitive data, so secrets get caught and rotated when they're exposed, not during the next periodic scan.

In wikis and issue trackers:

- Comprehensive scanning of Confluence pages and Jira issues including attachments, comments, and custom fields for credentials, API keys, and sensitive data patterns.

- Automated elimination of risky permissions and misconfigurations through policy enforcement and remediation.

- Permission audits that flag broad default access to spaces containing system prompts, model configurations, and AI architecture documentation, with automatic access revocation for stale permissions.

In artifact registries:

- Docker image scanning across all layers for embedded secrets.

- Package metadata analysis for internal URLs, tokens, and credentials that end up in configuration files.

- Automated blocking of public access to sensitive repositories and removal of stale users and roles.

- Access control audits that flag overly permissive repository access to production AI build artifacts.

In collaboration tools:

- Message and channel scanning across Slack and Teams for credentials, secrets, and bulk PII.

- Automated remediation policies that run one-time, on a schedule, or continuously in the background.

- Third-party app permission auditing with automatic removal of excessive privileges.

- Behavioral detection that surfaces anomalous access patterns like a single user accessing thousands of channels, with immediate access restriction.
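As a simplified picture of the behavioral detection described above, the sketch below counts distinct channels per user and flags anyone over a fixed limit. The event format, user names, and the threshold of 1,000 are assumptions for illustration; a real system would more likely compare each user against a learned baseline than use a single static number.

```python
from collections import defaultdict


def flag_broad_access(events, max_channels=1000):
    """Return users who touched more than max_channels distinct channels."""
    channels_by_user = defaultdict(set)
    for user, channel in events:
        channels_by_user[user].add(channel)
    return sorted(
        user
        for user, channels in channels_by_user.items()
        if len(channels) > max_channels
    )


# Hypothetical access log: (user, channel_id) pairs.
events = [("alice", f"C{i}") for i in range(5)]
events += [("svc-export", f"C{i}") for i in range(2500)]

print(flag_broad_access(events))  # ['svc-export']
```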

In AI assistants:

- Prompt analysis that detects secrets, PII, and sensitive code patterns before they create risk.

- Automated remediation of risky permissions, misconfigurations, ghost users, and sharing links.

- Usage monitoring that gives security teams visibility into what data developers are sharing with external AI providers, with automated policies that block high-risk data sharing.
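To show what prompt analysis can look like at its simplest, here is a sketch that redacts two kinds of sensitive values from a prompt before it is sent anywhere. The function name, placeholders, and patterns are hypothetical, and pattern matching alone will miss plenty of secrets and PII; treat it as an illustration of the checkpoint, not a complete filter.

```python
import re

# Illustrative patterns only: an AWS access key ID and an email address.
REDACTIONS = [
    (re.compile(r"\b(?:AKIA|ASIA)[0-9A-Z]{16}\b"), "[REDACTED_AWS_KEY_ID]"),
    (re.compile(r"\b[\w.+-]+@[\w-]+(?:\.[\w-]+)+\b"), "[REDACTED_EMAIL]"),
]


def redact_prompt(prompt: str) -> tuple[str, int]:
    """Replace sensitive matches and return (clean_prompt, match_count)."""
    total = 0
    for pattern, placeholder in REDACTIONS:
        prompt, count = pattern.subn(placeholder, prompt)
        total += count
    return prompt, total


clean, hits = redact_prompt(
    "Why does AKIAIOSFODNN7EXAMPLE fail for jane.doe@example.com?"
)
print(clean)  # Why does [REDACTED_AWS_KEY_ID] fail for [REDACTED_EMAIL]?
print(hits)   # 2
```

Returning the match count alongside the cleaned text lets a policy layer decide whether to allow, redact, or block the request.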

Secure AI application development with Varonis

Varonis secures AI development from planning to production:

- Full commit history scanning across GitHub and Bitbucket, not just current branches, with intelligent classification that separates test tokens from production credentials

- Comprehensive wiki and issue tracker security that scans Confluence pages and Jira issues, including attachments, comments, and custom fields, for credentials, API keys, and sensitive data patterns

- Docker image scanning that analyzes all layers for embedded secrets, plus package metadata analysis that catches internal URLs and tokens in configuration files

- Collaboration tool monitoring across messaging platforms like Slack and Teams for credentials and bulk PII, with third-party app permission auditing and anomalous access pattern detection

- AI assistant prompt analysis that detects secrets and PII before they create risk, giving security teams full visibility into what data developers share with external AI providers

Securing AI apps in development, deployment, and production

Once your AI applications are built and ready for deployment, you need security that covers testing, deployment, and production. This is where vulnerabilities in production environments, prompt injection attacks, and runtime misconfigurations become real threats.

Atlas: Comprehensive AI security for production systems

Varonis Atlas is an AI security platform that secures AI across the entire lifecycle, from posture management and security testing to runtime protection and governance. Atlas proactively stress tests your AI systems for vulnerabilities like prompt injection and jailbreaks through AI pen testing. Atlas enforces real-time guardrails through an AI Gateway that sits in the live request path, inspecting prompts, responses, and agent actions before they reach the model.

Complete AI security coverage

With Varonis developer data security and Atlas, you get end-to-end protection for AI applications, from initial planning through development to deployment and ongoing operations. This approach protects both the data that builds your AI systems and the systems that run them.

Are our AI systems secure?

Most security teams ask how secure their AI systems are, but they're missing the inputs needed to answer that question.

- What sensitive data is sitting in the repos where your AI system was built?

- What credentials are embedded in the Docker images running your AI agents?

- What data did your developers share with external AI assistants while building the system?

- Who can access the Confluence pages describing your agent's permission scopes?

If you can't answer those questions, your AI systems most likely have baked-in vulnerabilities.

Schedule a free Varonis risk assessment to see exactly what sensitive data is exposed across your developer ecosystem and get a clear path to remediation before your vulnerabilities turn into breaches.
