On April 19, 2026, Vercel, a cloud platform used by hundreds of thousands of organizations to deploy and host web applications, disclosed a security breach of its internal systems.

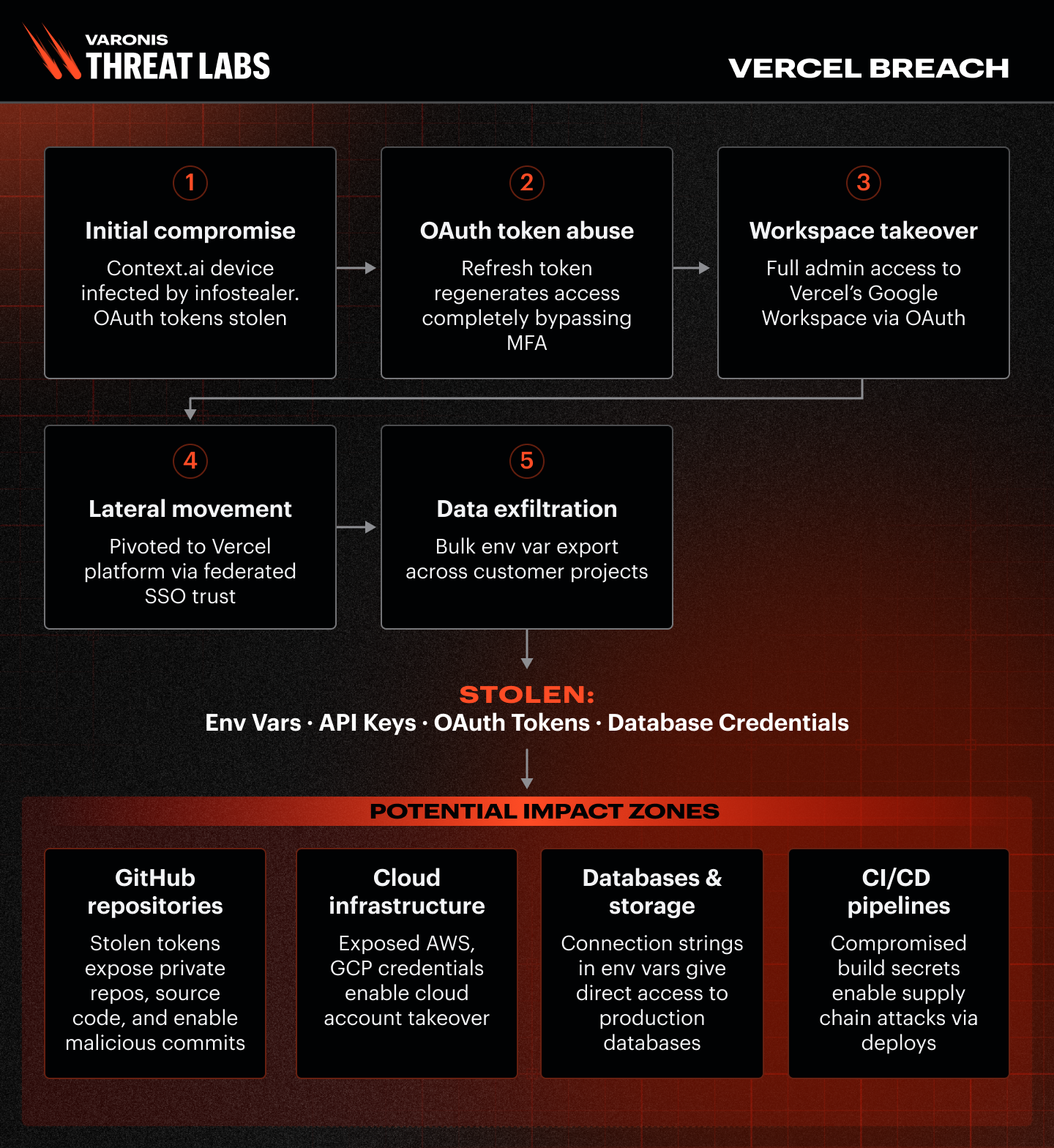

The attack began in Context.ai, a small AI productivity tool used by a Vercel employee. The tool was compromised, and the attacker used it as a stepping stone:

- Context.ai was infected with infostealer malware, which stole the app’s authentication credentials.

- The attacker used those credentials to silently access the Vercel employee’s Google Workspace account — bypassing multifactor authentication entirely, because OAuth tokens, once issued, do not require re-authentication.

- Via Google single sign-on, the attacker moved into Vercel’s internal systems — issue trackers, admin tools, and internal environments.

- The attacker then bulk-extracted environment variables from Vercel customer projects: the secrets and credentials companies store in Vercel to make their applications work.

The threat actor — believed to be ShinyHunters, a known cybercriminal group — is selling the stolen data for $2 million on underground forums.

Why this matters

Vercel stores the operational secrets of every application it deploys. If your organization uses Vercel, there is a significant chance that credentials stored in your Vercel environment were exposed. These credentials typically include:

- Cloud access keys (AWS, Azure, GCP), which provide direct access to your infrastructure, data storage, and internal services

- Database credentials, which provide direct access to your customer data, PII, and financial records

- GitHub tokens, which provide access to your source code and the ability to deploy code to your production applications

- Payment and third-party API keys, such as those for Stripe, Twilio, SendGrid, and similar services

Critically, this is not just a Vercel problem. If any of these credentials were stolen, an attacker could use them to access your systems — completely independently of Vercel. A stolen AWS key, for example, works against your AWS account regardless of how it was obtained.
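The credential categories above map onto the rotation order recommended under "What to do now": cloud credentials first, then databases, then GitHub, then everything else. Here is a minimal Python sketch that sorts a plain list of your project's environment variable names into that order. It assumes your variables follow common naming conventions. The regular expressions are illustrative guesses, not a Vercel feature, so adjust them to your own names.

```python
import re

# Hypothetical name patterns: adjust them to your own naming conventions.
# Order matters: earlier categories are rotated first.
ROTATION_PRIORITY = [
    ("cloud", re.compile(r"AWS_|AZURE_|GCP_|GOOGLE_CLOUD|GOOGLE_APPLICATION_CREDENTIALS", re.IGNORECASE)),
    ("database", re.compile(r"DATABASE_URL|POSTGRES|MYSQL|MONGO|REDIS|DB_", re.IGNORECASE)),
    ("github", re.compile(r"GITHUB_|GH_TOKEN", re.IGNORECASE)),
]


def categorize(name: str) -> tuple[int, str]:
    """Return (priority rank, category label) for one environment variable name."""
    for rank, (label, pattern) in enumerate(ROTATION_PRIORITY):
        if pattern.search(name):
            return rank, label
    # Anything unmatched (Stripe, Twilio, SendGrid, and so on) is rotated last.
    return len(ROTATION_PRIORITY), "other"


def rotation_order(env_names: list[str]) -> list[tuple[str, str]]:
    """Sort names into cloud, database, GitHub, other; alphabetical within each group."""
    ranked = sorted((categorize(name), name) for name in env_names)
    return [(label, name) for (_rank, label), name in ranked]


if __name__ == "__main__":
    names = ["STRIPE_SECRET_KEY", "DATABASE_URL", "AWS_SECRET_ACCESS_KEY", "GITHUB_TOKEN"]
    for label, name in rotation_order(names):
        print(f"{label:9} {name}")
```

Name matching is only a triage aid. A variable called `API_KEY` may still hold a cloud credential, so every entry needs rotating regardless of where it lands.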

What to do now

Immediately

- Check whether your organization uses Context.ai. Go to admin.google.com → Security → API Controls → Third-Party App Access and search for the following Client ID (if found, revoke access immediately):

  110671459871-30f1spbu0hptbs60cb4vsmv79i7bbvqj.apps.googleusercontent.com

- Rotate every secret stored in Vercel environment variables. Treat them all as compromised. Start with cloud credentials (AWS, Azure, GCP), then database passwords, then GitHub tokens, then everything else.

- Check your cloud provider logs (AWS CloudTrail, Azure Activity Log, GCP Audit Logs) for any unusual activity in the past 30 days from credentials associated with Vercel deployments.

- Check GitHub for unexpected webhooks, new deploy keys, or unfamiliar OAuth applications connected to your organization.

- Review recent Vercel deployments to confirm they were all triggered by your team.
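You can also run the Context.ai check across all users without the console. Google's Admin SDK Directory API lists each user's OAuth grants through its `tokens.list` method, and every token record carries `clientId`, `userKey`, `displayText`, and `scopes` fields. The sketch below covers only the filtering step. It assumes you have already fetched those records into plain Python dictionaries (for example with `google-api-python-client`) and leaves out authentication and pagination.

```python
# Client ID of the compromised Context.ai OAuth app, as given in the checklist above.
CONTEXT_AI_CLIENT_ID = "110671459871-30f1spbu0hptbs60cb4vsmv79i7bbvqj.apps.googleusercontent.com"


def find_context_ai_grants(token_records: list[dict]) -> list[tuple[str, list[str]]]:
    """Return (userKey, scopes) for every OAuth grant issued to the Context.ai client.

    Each record is assumed to follow the Directory API token resource shape,
    for example {"clientId": "...", "userKey": "...", "displayText": "...", "scopes": [...]}.
    """
    return [
        (record.get("userKey"), record.get("scopes", []))
        for record in token_records
        if record.get("clientId") == CONTEXT_AI_CLIENT_ID
    ]
```

Every user this returns still holds a live grant. Revoke it in the console, or through the Directory API's `tokens.delete` method.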
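For AWS, you can narrow the CloudTrail review to the access keys that were stored in Vercel. CloudTrail records include `eventTime`, `eventName`, `sourceIPAddress`, and `userIdentity.accessKeyId`. They are available from the JSON log files delivered to S3 (under the top-level `Records` array) or from boto3's `lookup_events`, whose `CloudTrailEvent` field holds the same record as a JSON string. This sketch assumes the records are already parsed into dictionaries. It also assumes `expected_ips` is an allowlist you maintain yourself. That input is hypothetical: many deployments have no fixed set of source addresses, and in that case every event for those keys deserves review.

```python
from datetime import datetime, timedelta, timezone

CLOUDTRAIL_TIME_FORMAT = "%Y-%m-%dT%H:%M:%SZ"  # e.g. "2026-04-15T08:30:00Z"


def suspicious_events(records, vercel_key_ids, expected_ips, now, days=30):
    """Return (eventTime, accessKeyId, eventName, sourceIPAddress) tuples for events in the
    last `days` days that used a Vercel-stored key from an IP outside `expected_ips`.

    `now` must be a timezone-aware datetime (UTC), since CloudTrail times are UTC.
    """
    cutoff = now - timedelta(days=days)
    flagged = []
    for record in records:
        key_id = record.get("userIdentity", {}).get("accessKeyId")
        if key_id not in vercel_key_ids:
            continue  # not one of the keys that lived in Vercel
        when = datetime.strptime(record["eventTime"], CLOUDTRAIL_TIME_FORMAT).replace(tzinfo=timezone.utc)
        if when < cutoff:
            continue  # outside the review window
        if record.get("sourceIPAddress") not in expected_ips:
            flagged.append((record["eventTime"], key_id, record.get("eventName"), record.get("sourceIPAddress")))
    return flagged
```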
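You can script the GitHub check against the REST API. `GET /repos/{owner}/{repo}/hooks` returns webhooks, each with an `id` and a `config.url`. `GET /repos/{owner}/{repo}/keys` returns deploy keys, each with an `id` and a `created_at` timestamp. Here is a minimal sketch that assumes both responses are already decoded from JSON. `known_hook_hosts` is a hypothetical allowlist of webhook destinations your team recognizes.

```python
from datetime import datetime, timezone
from urllib.parse import urlparse

GITHUB_TIME_FORMAT = "%Y-%m-%dT%H:%M:%SZ"  # e.g. "2026-04-18T12:00:00Z"


def review_repo_access(hooks, deploy_keys, known_hook_hosts, since):
    """Return (ids of webhooks whose URL host is not allowlisted,
    ids of deploy keys created at or after `since`).

    `since` must be a timezone-aware datetime marking the start of the review window.
    """
    unknown_hooks = [
        hook["id"]
        for hook in hooks
        # A missing or unparsable URL yields hostname None, which is also flagged.
        if urlparse(hook.get("config", {}).get("url", "")).hostname not in known_hook_hosts
    ]
    new_keys = [
        key["id"]
        for key in deploy_keys
        if datetime.strptime(key["created_at"], GITHUB_TIME_FORMAT).replace(tzinfo=timezone.utc) >= since
    ]
    return unknown_hooks, new_keys
```

The same webhook check applies to organization-level webhooks, which are listed by `GET /orgs/{org}/hooks`.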

Over the next two weeks

- Mark all secrets in Vercel as “Sensitive” (a Vercel setting that prevents credentials from being readable through the admin interface).

- Audit which AI tools and third-party applications have broad access to your team’s Google or Microsoft accounts and revoke any that are not business-critical.

- Ensure cloud service accounts used by Vercel have only the permissions they actually need, not broad access to your entire infrastructure.
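For AWS, a first pass at the least-privilege review is to look for `Allow` statements that grant every action (`*`), every action in a service (such as `s3:*`), or every resource. The sketch below checks one IAM policy document in its standard JSON form. It is deliberately simplified: it ignores `NotAction`, `NotResource`, and conditions. The bucket name in the example is hypothetical.

```python
def overbroad_statements(policy: dict) -> list[int]:
    """Return the indexes of Allow statements that use wildcard actions or a bare '*' resource."""
    statements = policy.get("Statement", [])
    if isinstance(statements, dict):  # a single statement may be given as an object
        statements = [statements]
    flagged = []
    for index, statement in enumerate(statements):
        if statement.get("Effect") != "Allow":
            continue  # Deny statements only narrow access
        actions = statement.get("Action", [])
        resources = statement.get("Resource", [])
        # Action and Resource may each be a single string or a list of strings.
        actions = [actions] if isinstance(actions, str) else actions
        resources = [resources] if isinstance(resources, str) else resources
        if any(a == "*" or a.endswith(":*") for a in actions) or "*" in resources:
            flagged.append(index)
    return flagged


if __name__ == "__main__":
    policy = {
        "Version": "2012-10-17",
        "Statement": [
            # Scoped: read-only access to one (hypothetical) bucket.
            {"Effect": "Allow", "Action": "s3:GetObject", "Resource": "arn:aws:s3:::example-app-assets/*"},
            # Overbroad: every S3 action on every resource.
            {"Effect": "Allow", "Action": ["s3:*"], "Resource": "*"},
        ],
    }
    print(overbroad_statements(policy))
```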

The bigger picture

The larger trend is clear: AI productivity tools are the new supply chain attack vector. These tools require broad access to email, documents, and identity systems to function — and most organizations have not established governance programs to track or control those permissions. A compromise at a small AI vendor can cascade into breaches at many enterprises.

Why third-party AI tools increase enterprise risk

The Vercel incident highlights a high-impact risk pattern. Organizations increasingly rely on platforms like Vercel to orchestrate the entire software delivery lifecycle: builds, CI/CD pipelines, preview environments, and production deployments. When employees connect third-party AI tools to corporate identity and productivity suites, they extend the trust boundary to that vendor. If that AI vendor (or its OAuth tokens) is compromised, the attacker can use the stolen access to pivot into the very systems that control how code is built and shipped.

That matters because a compromise of a deployment platform is rarely contained. From Vercel (or any similar orchestration layer), an attacker may be able to read or modify build settings, add malicious build steps, trigger deployments, and extract environment variables — which commonly include cloud keys, database credentials, signing secrets, and source control tokens. In other words, a third-party AI tool compromise can become an end-to-end supply-chain attack: from OAuth access, to CI/CD control, to production infrastructure and data. The takeaway: treat AI app integrations as potential entry points to your delivery pipeline, enforce least-privilege scopes, monitor OAuth grants continuously, and be ready to rotate the secrets your CI/CD platform can access.
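Continuous OAuth monitoring can start with a periodic scan of Google Workspace grants for high-impact scopes. This sketch assumes token records shaped like the Admin SDK Directory API token resource (`clientId`, `userKey`, `scopes`). It groups broad grants by app, so reviewers can see which tools hold mailbox, Drive, or directory access and for how many users. The scope list is an illustrative starting point, not an exhaustive or official classification.

```python
from collections import defaultdict

# Illustrative set of high-impact Google OAuth scopes; extend it to match your own policy.
BROAD_SCOPES = {
    "https://mail.google.com/",  # full Gmail access
    "https://www.googleapis.com/auth/gmail.readonly",
    "https://www.googleapis.com/auth/drive",  # full Drive access
    "https://www.googleapis.com/auth/drive.readonly",
    "https://www.googleapis.com/auth/admin.directory.user",
}


def broad_grants_by_app(token_records: list[dict]) -> dict:
    """Map clientId -> {"users": set of userKeys, "scopes": set of broad scopes granted}."""
    apps = defaultdict(lambda: {"users": set(), "scopes": set()})
    for record in token_records:
        risky = BROAD_SCOPES.intersection(record.get("scopes", []))
        if risky:  # ignore grants that only hold narrow scopes such as openid or email
            entry = apps[record.get("clientId")]
            entry["users"].add(record.get("userKey"))
            entry["scopes"] |= risky
    return dict(apps)
```

Running the scan on a schedule and comparing its output with the previous run surfaces new broad grants as they appear. That comparison is one way to approximate the continuous monitoring described above.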

How Varonis can help

Varonis monitors GitHub, AWS, Azure, GCP, and other platforms in real time. When a stolen credential is used anomalously — from an unexpected location, accessing unusual data — Varonis alerts immediately and shows exactly what data was accessed, enabling rapid response and accurate breach scoping. In addition, our MDDR specialists are monitoring your environments 24/7 and will proactively alert if something suspicious happens.

If you would like a free assessment of your exposure across these platforms, contact your Varonis representative or visit varonis.com/request-demo.

