As artificial intelligence becomes increasingly central to enterprise operations, the need for robust governance frameworks has never been greater. ISO/IEC 42001, the first international standard for AI management systems (AIMS), provides organizations with a structured approach to managing AI risks across the entire AI lifecycle. However, achieving and sustaining certification can be daunting without the right tools and processes.

Enter Varonis Atlas — a platform purpose-built to operationalize ISO/IEC 42001 at scale, delivering the technical controls, evidence, and continuous monitoring required for effective AI governance.

What is an AIMS for ISO/IEC 42001 compliance?

ISO/IEC 42001 defines an Artificial Intelligence Management System (AIMS) as a coordinated system of people, processes, and supporting technologies, designed to manage risk throughout the AI lifecycle. Implementing an AIMS isn't just about deploying new technology; it requires organizational commitment, clear processes, and ongoing oversight. The standard lays out comprehensive requirements—from inventorying AI systems and managing risk, to ensuring leadership accountability and facilitating continuous improvement.

How Varonis Atlas maps directly to ISO/IEC 42001 requirements

Varonis Atlas stands out by addressing ISO/IEC 42001 requirements both directly and indirectly. Direct contributions come in the form of technical controls and system behaviors explicitly required by the standard. Indirectly, Atlas empowers organizations to execute governance processes led by people and policies, creating a bridge between technology and enterprise-wide accountability.

Below is a breakdown of how Atlas achieves these ends with the support of organizational policies and talent.

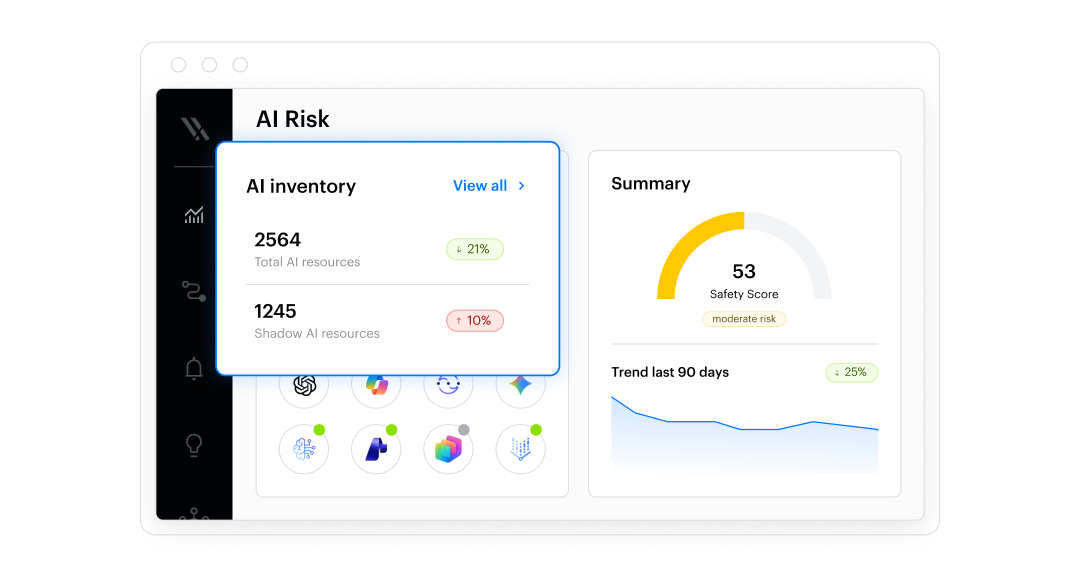

Comprehensive AI inventory and scope definition

One of the foundational steps in ISO/IEC 42001 is defining the scope of the AIMS by identifying which AI systems are subject to governance. Varonis Atlas automates this step by continuously discovering and inventorying AI systems, whether sanctioned, custom, embedded, or shadow AI. The platform scans cloud environments, code repositories, AI services, and agentic frameworks, so that systems adopted outside formal approval channels still appear in the inventory. This dynamic inventory allows organizations to maintain an accurate and defensible scope, which is essential for risk management and audit readiness.
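To make the idea of a continuously updated inventory concrete, here is a minimal sketch in Python. It is not Atlas code or an Atlas API: the `AISystem` record, the `Origin` categories (taken from the four kinds of AI systems named above), and both helper functions are hypothetical names chosen for illustration.

```python
from dataclasses import dataclass
from enum import Enum


class Origin(Enum):
    # Categories mirror the article's wording; the enum itself is hypothetical.
    SANCTIONED = "sanctioned"
    CUSTOM = "custom"
    EMBEDDED = "embedded"
    SHADOW = "shadow"


@dataclass(frozen=True)
class AISystem:
    name: str
    origin: Origin
    source: str  # where the system was discovered, e.g. "cloud" or "code-repo"


def merge_inventory(known: dict[str, AISystem], discovered: list[AISystem]) -> list[AISystem]:
    """Add newly discovered systems to `known` and return only the new ones."""
    new = []
    for system in discovered:
        if system.name not in known:
            known[system.name] = system
            new.append(system)
    return new


def needs_scope_decision(system: AISystem) -> bool:
    """Shadow AI has not been through an approval process, so flag it for a scope decision."""
    return system.origin is Origin.SHADOW
```

Each discovery pass would call `merge_inventory` with whatever the scanners found; anything it returns is new since the last pass, and systems flagged by `needs_scope_decision` would go to the people who formally approve the AIMS scope.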

AI risk identification and technical controls

ISO/IEC 42001 requires ongoing identification and management of AI-specific risks. Atlas addresses this with AI Security Posture Management (AI-SPM), which continuously assesses systems for vulnerabilities, misconfigurations, and data exposure. It combines static analysis with dynamic adversarial testing, including penetration testing for large language model (LLM) endpoints and model artifact scanning. This proactive approach uncovers issues such as prompt injection or excessive agent privileges before they can be exploited, and documents findings in structured, auditable reports.

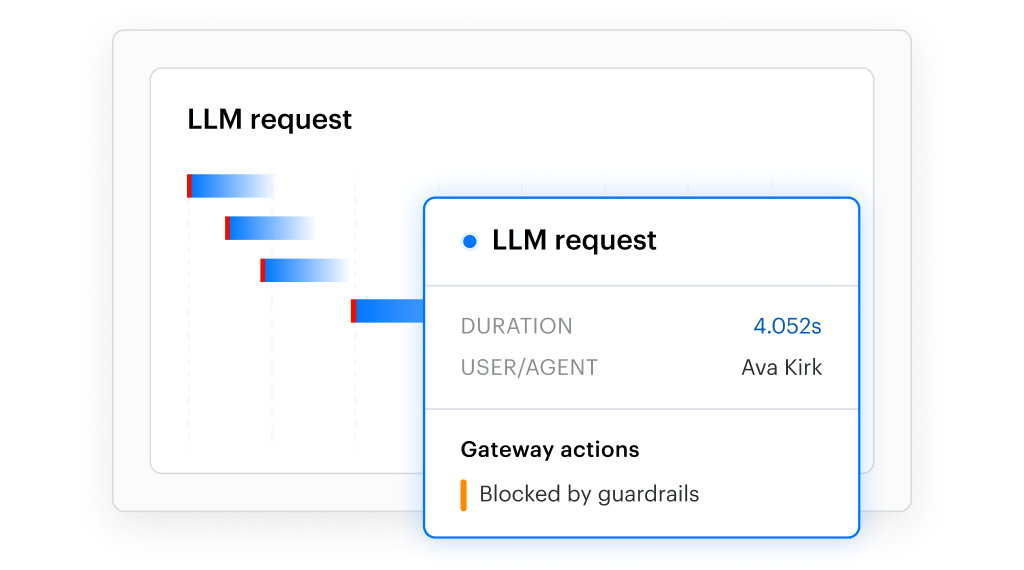

Real-time monitoring, logging, and incident response

Many AI risks only become apparent at runtime. Atlas addresses this with a robust observability architecture that captures comprehensive telemetry — prompts, responses, agent actions, tool calls, and policy enforcement events — across production environments. These immutable logs are stored in customer-controlled environments, ensuring data integrity and regulatory compliance. Integration with incident management workflows allows organizations to escalate and respond to significant AI events seamlessly, supporting the review and oversight required by ISO/IEC 42001.
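One common way to make a log tamper-evident is hash chaining, where each entry stores a hash that covers the previous entry's hash. The sketch below illustrates that general technique in Python; it is an assumption about how immutability could be achieved, not a description of how Atlas stores its logs. The function names and event fields are hypothetical.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(prev_hash: str, event: dict) -> str:
    # Serialize deterministically so the same event always hashes the same way.
    payload = json.dumps(event, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256((prev_hash + payload).encode("utf-8")).hexdigest()


def append_event(log: list[dict], event: dict) -> dict:
    """Append an event whose hash covers both its content and the previous entry's hash."""
    prev_hash = log[-1]["hash"] if log else GENESIS
    entry = {"event": event, "prev_hash": prev_hash, "hash": _digest(prev_hash, event)}
    log.append(entry)
    return entry


def verify_chain(log: list[dict]) -> bool:
    """Recompute every hash; any edited, removed, or reordered entry breaks the chain."""
    prev_hash = GENESIS
    for entry in log:
        if entry["prev_hash"] != prev_hash:
            return False
        if entry["hash"] != _digest(prev_hash, entry["event"]):
            return False
        prev_hash = entry["hash"]
    return True
```

An auditor who holds only the latest hash can detect after-the-fact edits to any earlier prompt, response, or tool-call record, because changing one entry invalidates every hash that follows it.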

Evidence collection and continuous compliance

ISO/IEC 42001 emphasizes demonstrable compliance. Varonis Atlas transforms technical telemetry into structured, audit-ready evidence by mapping standard requirements to system-generated artifacts, including AI inventories, risk findings, runtime logs, and uploaded policies. Automated workflows guide users through risk assessments and reviews, reducing the manual effort required to collect and correlate evidence. This ensures compliance becomes an ongoing operational practice rather than a one-time exercise.

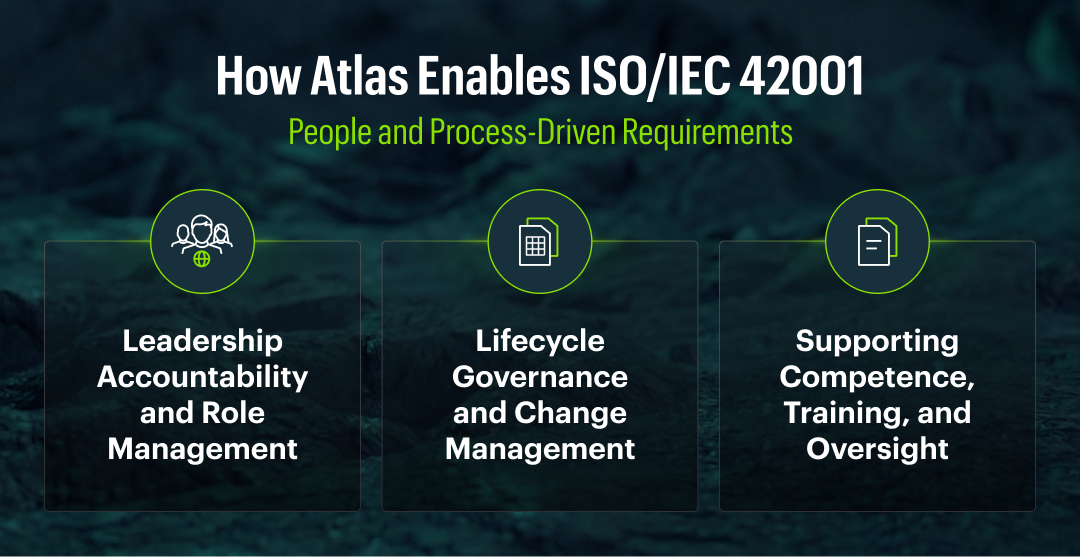
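The evidence mapping described above can be pictured as a lookup from clauses to the artifact types that support them, plus a check for what is still missing. The Python sketch below is illustrative only: the clause-to-artifact assignments are example assumptions, not an official mapping from the standard or from Atlas, and `evidence_gaps` is a hypothetical helper.

```python
# Example assumption: which artifact types might support which clauses.
REQUIRED_EVIDENCE: dict[str, set[str]] = {
    "4.3": {"ai_inventory"},
    "5.1": {"uploaded_policies"},
    "6.1": {"risk_findings"},
    "9.1": {"runtime_logs", "risk_findings"},
}


def evidence_gaps(required: dict[str, set[str]], collected: set[str]) -> dict[str, set[str]]:
    """Return, per clause, the artifact types that have not been collected yet."""
    gaps = {}
    for clause, needed in required.items():
        missing = needed - collected
        if missing:
            gaps[clause] = missing
    return gaps
```

Running the check on every collection cycle, rather than once before an audit, is what turns evidence gathering into an ongoing practice: a clause with an empty gap set has at least one artifact of each expected type on file.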

Enabling people and process-driven AIMS requirements

No technology can replace the human elements of oversight, leadership, and organizational process. However, Atlas empowers people and processes to be more effective, transparent, and auditable.

Leadership accountability and role management

ISO/IEC 42001 requires clear leadership accountability and defined responsibilities. Atlas provides executive-level visibility into AI risk posture, incidents, and compliance evidence through intuitive dashboards and reporting. Role-based access controls, project scoping, and action traceability ensure that duties are clearly separated among developers, security teams, compliance officers, and auditors — enabling transparency and effective oversight without displacing executive ownership.

Lifecycle governance and change management

Governance doesn’t end after deployment. Atlas provides continuous visibility throughout the AI system lifecycle, detecting changes in models, dependencies, and risk posture. Automatic triggers for reassessment workflows ensure that lifecycle governance remains informed by real system behavior rather than static documentation.
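Change-triggered reassessment can be sketched as comparing a fingerprint of a system's recorded state against its current state. The Python example below shows that general pattern under stated assumptions: the manifest fields (`model`, `dependencies`) and the function names are hypothetical, and nothing here reflects Atlas internals.

```python
import hashlib
import json


def fingerprint(manifest: dict) -> str:
    """Hash a canonical JSON form of the manifest so key order does not matter."""
    canonical = json.dumps(manifest, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def changed_fields(old: dict, new: dict) -> list[str]:
    """List the top-level fields whose values differ between two manifests."""
    keys = set(old) | set(new)
    return sorted(k for k in keys if old.get(k) != new.get(k))


def needs_reassessment(old: dict, new: dict) -> bool:
    """Any difference in the fingerprint means the last assessment may be stale."""
    return fingerprint(old) != fingerprint(new)
```

In practice an organization would decide which changes are significant enough to reopen a risk assessment; `changed_fields` gives reviewers a starting point for that decision.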

Supporting competence, training, and human oversight

To comply with ISO/IEC 42001, organizations must ensure that personnel are competent and that meaningful human oversight is maintained. Atlas enables this by making AI risks, behaviors, and policy enforcement visible through dashboards and review workflows. Human oversight actions are recorded as auditable evidence, reinforcing — not replacing — the human judgment required by the standard.

Operationalizing trustworthy AI governance with Varonis Atlas

ISO/IEC 42001 makes clear that trustworthy AI governance is not about isolated policies or one-off technical solutions; it's about building an operational management system that can evolve alongside your AI initiatives.

Varonis Atlas provides the technical controls, continuous visibility, and audit-ready evidence necessary for an effective AIMS at enterprise scale. By aligning advanced technology with people and process-driven governance, Atlas helps organizations move from aspirational AI compliance to practical, defensible, and repeatable operations.

Ready to see how Varonis Atlas can jumpstart your ISO/IEC 42001 journey? Connect with our team and experience a proof of value tailored to your organization’s unique needs.

Disclaimer: Atlas does not replace an organization’s AIMS, nor does it claim to independently establish or certify ISO/IEC 42001 compliance.

Appendix: Mapping Varonis Atlas to ISO/IEC 42001

| ISO/IEC 42001 Clause | Atlas Capability | Customer Responsibility |
| --- | --- | --- |
| Clause 4.1 – Understanding the organization and its context | Provides visibility into how AI systems are deployed, connected, and used across the environment, enabling organizations to understand technical AI exposure within their operational context. | Define organizational context, regulatory obligations, risk tolerance, and business objectives that shape the AIMS. |
| Clause 4.3 – Determining the scope of the AIMS | Continuously discovers and inventories AI systems, models, agents, tools, dependencies, and shadow AI to support the accurate definition of the AIMS scope. | Formally define and approve the scope of the AIMS, including which AI systems are in scope and why. |
| Clause 5.1 – Leadership and commitment | Provides executive-level visibility into AI risk posture, incidents, and compliance evidence through dashboards and reports. | Establish leadership accountability, approve AI policies, and demonstrate commitment to AI governance. |
| Clause 5.3 – Organizational roles, responsibilities, and authorities | Supports role-based access, project scoping, and traceability of actions taken within AI governance workflows. | Assign roles, responsibilities, and decision-making authority for AI governance across the organization. |
| Clause 6.1 – Actions to address risks and opportunities | Identifies AI-specific technical risks through posture management, scanning, and testing; generates structured risk findings. | Determine risk acceptance criteria, approve mitigation strategies, and document risk treatment decisions. |
| Clause 7.2 – Competence | Makes AI system behavior and risk visible to support informed human oversight and review. | Ensure personnel have appropriate training, skills, and competence to manage and oversee AI systems. |
| Clause 7.3 – Awareness | Provides ongoing visibility into AI usage, risk trends, and policy violations, supporting awareness across teams. | Establish training, awareness programs, and communication related to AI governance obligations. |
| Clause 8.1 – Operational planning and control | Enables lifecycle visibility across development, deployment, and runtime through inventory, change detection, and monitoring. | Define and enforce operational processes governing AI system design, deployment, change, and retirement. |
| Clause 8.2 – AI risk management | Implements continuous technical risk identification through AI-SPM, pen testing, and runtime analysis. | Perform formal risk assessments, approve controls, and integrate AI risk management into enterprise risk processes. |
| Clause 8.3 – AI system lifecycle management | Detects changes to AI systems, dependencies, and behavior that may require reassessment or review. | Maintain lifecycle governance policies, approval gates, and documentation for AI systems. |
| Clause 8.4 – Monitoring of AI systems | Captures runtime telemetry, guardrail enforcement events, and AI activity logs in auditable, immutable records. | Define monitoring objectives, escalation criteria, and oversight processes for AI system operation. |
| Clause 9.1 – Monitoring, measurement, analysis, and evaluation | Provides measurable AI risk indicators, compliance status, and operational metrics derived from system activity. | Evaluate the effectiveness of the AIMS and determine whether objectives and controls are being met. |
| Clause 9.2 – Internal audit | Generates structured, evidence-based reports aligned to ISO/IEC 42001 requirements. | Plan and conduct internal audits and ensure independence of audit activities. |
| Clause 9.3 – Management review | Aggregates technical evidence and risk insights to support management review inputs. | Conduct management reviews and make decisions on AIMS improvements and direction. |
| Clause 10 – Improvement | Tracks recurring issues, risk trends, and remediation outcomes over time. | Drive continual improvement of the AIMS based on audit results, incidents, and performance reviews. |
